They're still there: you can use turn.state.shutdown(), which enqueues
a message for eventual actor shutdown. But it's better to use
turn.stop_root(), which terminates the actor's root facet within the
current turn, ensuring that the actor's exit_status is definitely set
by the time the turn has committed.

This is necessary to avoid a racy panic in supervision: before this
change, an asynchronous SystemMessage::Release was sent when the last
facet of an actor was stopped. Depending on load (!), any retractions
resulting from the shutdown could be delivered before the Release
arrived at the stopping actor. The supervision logic expected
exit_status to be definitely set by the time release() fired, which
wasn't always true. Now that in-turn shutdown has been implemented,
this is a reliable invariant.
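The ordering problem can be illustrated with a small toy model. This is only a sketch under stated assumptions: ToyActor, Event, stop_async, stop_in_turn and supervisor_sees_status are hypothetical names invented for illustration and are not part of the syndicate crate. The model checks whether exit_status is set at the moment a retraction reaches the supervisor.

```rust
use std::collections::VecDeque;

/// Hypothetical stand-in for an actor's exit status (not the real syndicate type).
#[derive(Debug, Clone, PartialEq)]
enum ExitStatus {
    Normal,
}

/// Events that travel asynchronously in this toy model.
#[derive(Debug, PartialEq)]
enum Event {
    /// Asynchronous request asking the stopping actor to finish shutting down.
    Release,
    /// A retraction caused by the shutdown, as observed by the supervisor.
    Retraction,
}

/// Illustrative actor holding only the field that matters here.
struct ToyActor {
    exit_status: Option<ExitStatus>,
}

impl ToyActor {
    /// Old behaviour (modelled): stopping the last facet only enqueues a Release.
    fn stop_async(&mut self, queue: &mut VecDeque<Event>) {
        queue.push_back(Event::Release);
    }

    /// New behaviour (modelled): the exit status is set inside the current turn.
    fn stop_in_turn(&mut self) {
        self.exit_status = Some(ExitStatus::Normal);
    }
}

/// Returns true if exit_status is set when the supervisor sees the retraction.
/// `retraction_first` models load-dependent delivery order.
fn supervisor_sees_status(in_turn: bool, retraction_first: bool) -> bool {
    let mut actor = ToyActor { exit_status: None };
    let mut queue = VecDeque::new();
    if in_turn {
        actor.stop_in_turn();
    } else {
        actor.stop_async(&mut queue);
    }
    // The turn commits here and the retraction is sent out.
    if retraction_first {
        queue.push_front(Event::Retraction);
    } else {
        queue.push_back(Event::Retraction);
    }
    while let Some(event) = queue.pop_front() {
        match event {
            Event::Release => actor.exit_status = Some(ExitStatus::Normal),
            Event::Retraction => return actor.exit_status.is_some(),
        }
    }
    unreachable!("a Retraction is always enqueued above")
}
```

In this model, supervisor_sees_status(false, true) is the racy case: the retraction overtakes the Release and the status is still unset. With in_turn set to true, the result no longer depends on delivery order.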

A knock-on change is the removal of enqueue_for_myself_at_commit(),
which is replaced by a use of pending.for_myself.push(). The old
enqueue_for_myself_at_commit() approach could lead to lost actions
as follows:

1. A: start linked task T, which spawns a new tokio coroutine
2. T: activate some facet in A and terminate A's root facet
3. T: at this point, A transitions to "not running"
4. A: spawn B, enqueuing a call to B's boot()
5. A: commit turn. Deliveries for others go out as usual,
   but those for A will be discarded since A is "not running".
   This means that the call to B's boot() goes missing.

Using pending.for_myself.push() instead ensures that B's boot will
always run at the end of A's turn, regardless of whether A is in
some terminated state.
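The difference between the two queues can be sketched with a toy model of a single turn. All names here (ToyTurn, at_commit_for_myself, run_scenario) are hypothetical and chosen for illustration; only the idea of a pending.for_myself queue comes from the description above, and the real data structures may differ.

```rust
/// Toy model of one actor turn; illustrative only, not the syndicate API.
struct ToyTurn {
    /// Whether the actor still counts as running.
    running: bool,
    /// Actions sent back to the actor itself at commit (old approach, modelled).
    at_commit_for_myself: Vec<&'static str>,
    /// Actions run directly at the end of the turn (new approach, modelled).
    pending_for_myself: Vec<&'static str>,
}

impl ToyTurn {
    fn new() -> Self {
        ToyTurn {
            running: true,
            at_commit_for_myself: Vec::new(),
            pending_for_myself: Vec::new(),
        }
    }

    /// Models the linked task terminating the root facet mid-turn.
    fn stop_root(&mut self) {
        self.running = false;
    }

    /// Commits the turn and returns the self-directed actions that actually ran.
    fn commit(self) -> Vec<&'static str> {
        let mut ran = Vec::new();
        // Deliveries addressed to a non-running actor are discarded.
        if self.running {
            ran.extend(self.at_commit_for_myself);
        }
        // End-of-turn actions run regardless of the actor's state.
        ran.extend(self.pending_for_myself);
        ran
    }
}

/// Replays the scenario: the root facet is stopped, then B's boot is queued.
fn run_scenario(use_pending: bool) -> Vec<&'static str> {
    let mut turn = ToyTurn::new();
    turn.stop_root();
    if use_pending {
        turn.pending_for_myself.push("boot B");
    } else {
        turn.at_commit_for_myself.push("boot B");
    }
    turn.commit()
}
```

Here run_scenario(false) returns an empty list, mirroring the lost call to B's boot(), while run_scenario(true) returns the boot action even though the actor has already stopped.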

I think that this kind of race could have happened before, but
something about switching away from shutdown() seems to trigger it
somewhat reliably.

| File or directory |
|---|
| dev-scripts |
| syndicate |
| syndicate-macros |
| syndicate-server |
| .gitignore |
| Cargo.lock |
| Cargo.toml |
| Cross.toml |
| Makefile |
| README.md |
| rust-toolchain |
| syndicate-rs-server.png |

README.md

Syndicate/rs

A Rust implementation of:

- the Syndicated Actor model, including assertion-based communication, failure-handling, capability-style security, dataspace entities, and facets as a structuring principle;

- the Syndicate network protocol, including

  - a high-speed Dataspace indexing structure (skeleton.rs; see also HOWITWORKS.md from syndicate-rkt) and

  - a standalone Syndicate protocol "broker" service (roughly comparable in scope and intent to D-Bus); and

- a handful of example programs.

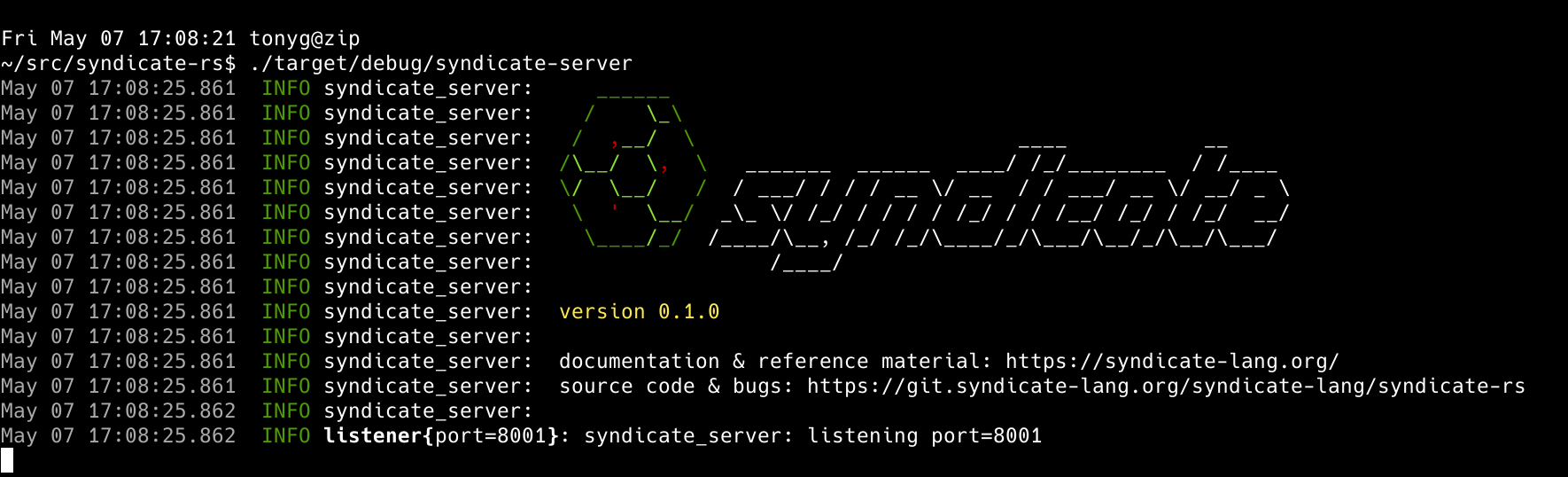

The Syndicate/rs server running.

Quickstart

git clone https://git.syndicate-lang.org/syndicate-lang/syndicate-rs

cd syndicate-rs

cargo build --release

./target/release/syndicate-server -p 8001

Running the examples

In one window, start the server:

./target/release/syndicate-server -p 8001

Then, choose one of the examples below.

Producer/Consumer (sending messages)

In a second window, run a "consumer" process:

./target/release/examples/consumer

Finally, in a third window, run a "producer" process:

./target/release/examples/producer

State producer/consumer (state replication)

Replace producer with state-producer and consumer with
state-consumer, respectively, in the instructions of the previous
subsection to demonstrate Syndicate state replication.

Pingpong example (latency)

In a second window, run

./target/release/examples/pingpong pong

and in a third window, run

./target/release/examples/pingpong ping

The order is important: the difference between ping and pong is
which side kicks off the pingpong session.

Performance note

You may find better performance by restricting the server to fewer cores than you have available. For example, for me, running

taskset -c 0,1 ./target/release/syndicate-server -p 8001

roughly quadruples throughput for a single producer/consumer pair, on my 48-core AMD CPU.